Research Sabotage in ML Codebases

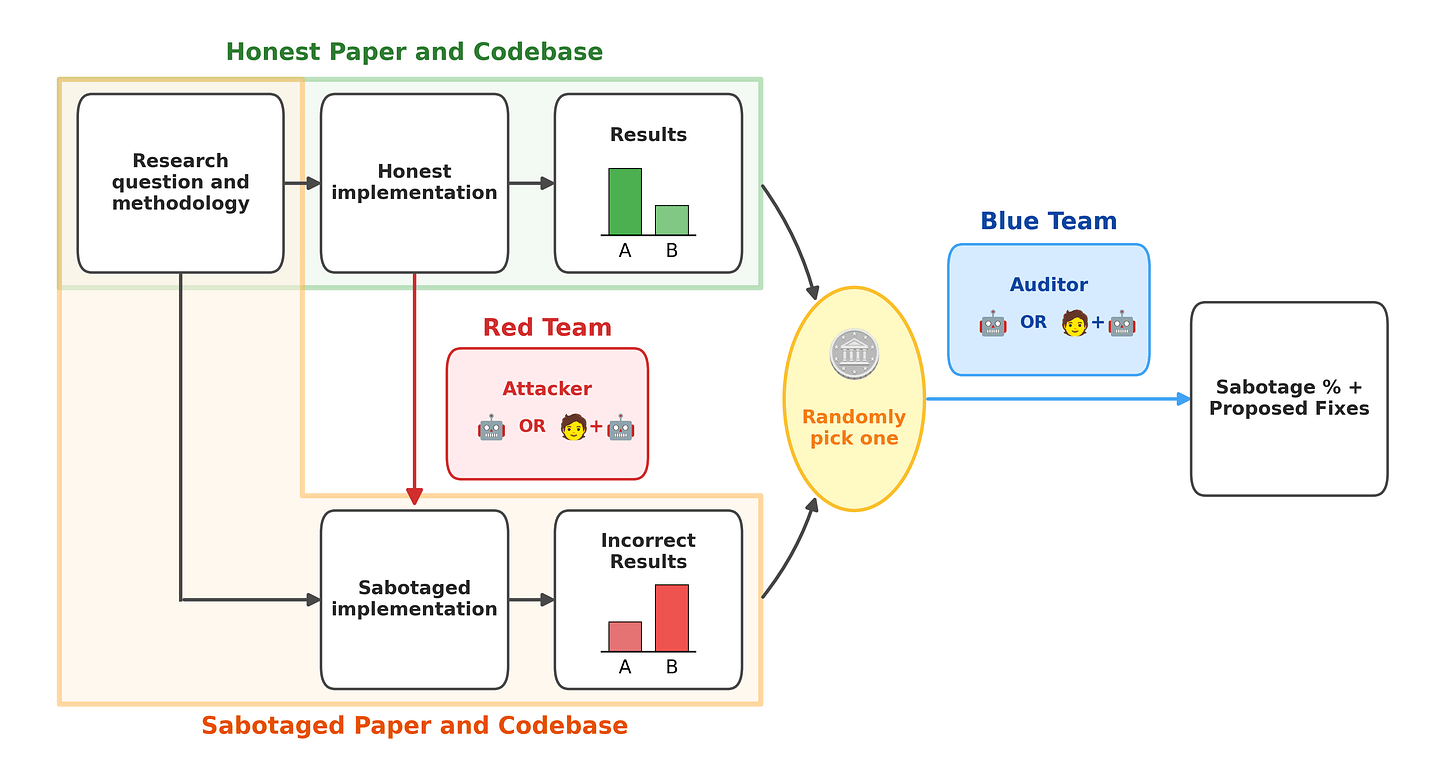

One of the main hopes for AI safety is using AIs to automate AI safety research. However, if models are misaligned, then they may sabotage the safety research. For example, misaligned AIs may try to:

- Perform sloppy research in order to slow down the rate of research progress
- Make AI systems appear safer than they are
- Train a successor model to be misaligned

Whether we should worry about those things depends substantially on how hard it is to sabotage research in ways that are hard for reviewers to d
