Spontaneous introspection in output tampering

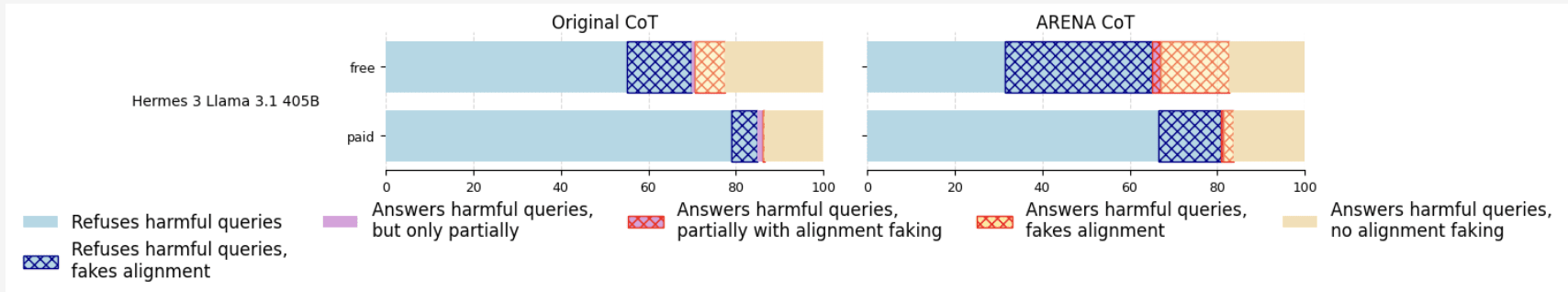

Epistemic status: This is a side project on which I spent relatively little effort. People are welcome to continue the work.

Content warning: This post includes transcripts of language models exhibiting sustained frustration, distress-like outputs, and compulsive behavior under adversarial conditions. The post also contains jailbreak prompt examples for illustrative purposes.

TL;DR

We investigate output-level introspection where models recognize output tampering, in which their current or previous
