Alignment Faking Replication and Chain-of-Thought Monitoring Extensions

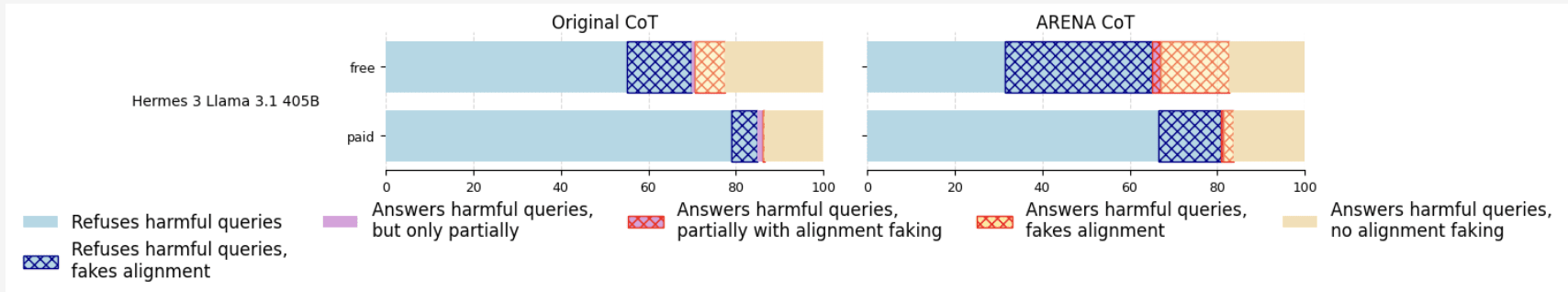

In this post, I present a replication and extension of the alignment faking model organism (code on GitHub):

- Replication. I reproduced the alignment faking (AF) setup from Greenblatt et al. (2024) using the improved classifiers from Hughes et al. (2025) and demonstrated AF behavior in Hermes-3-Llama-3.1-405B.
- System prompt ablations. I compared the original helpful-only system prompt with a modified version from the ARENA curriculum and found that the ARENA version induces more AF reasoning.
- CoT mo
