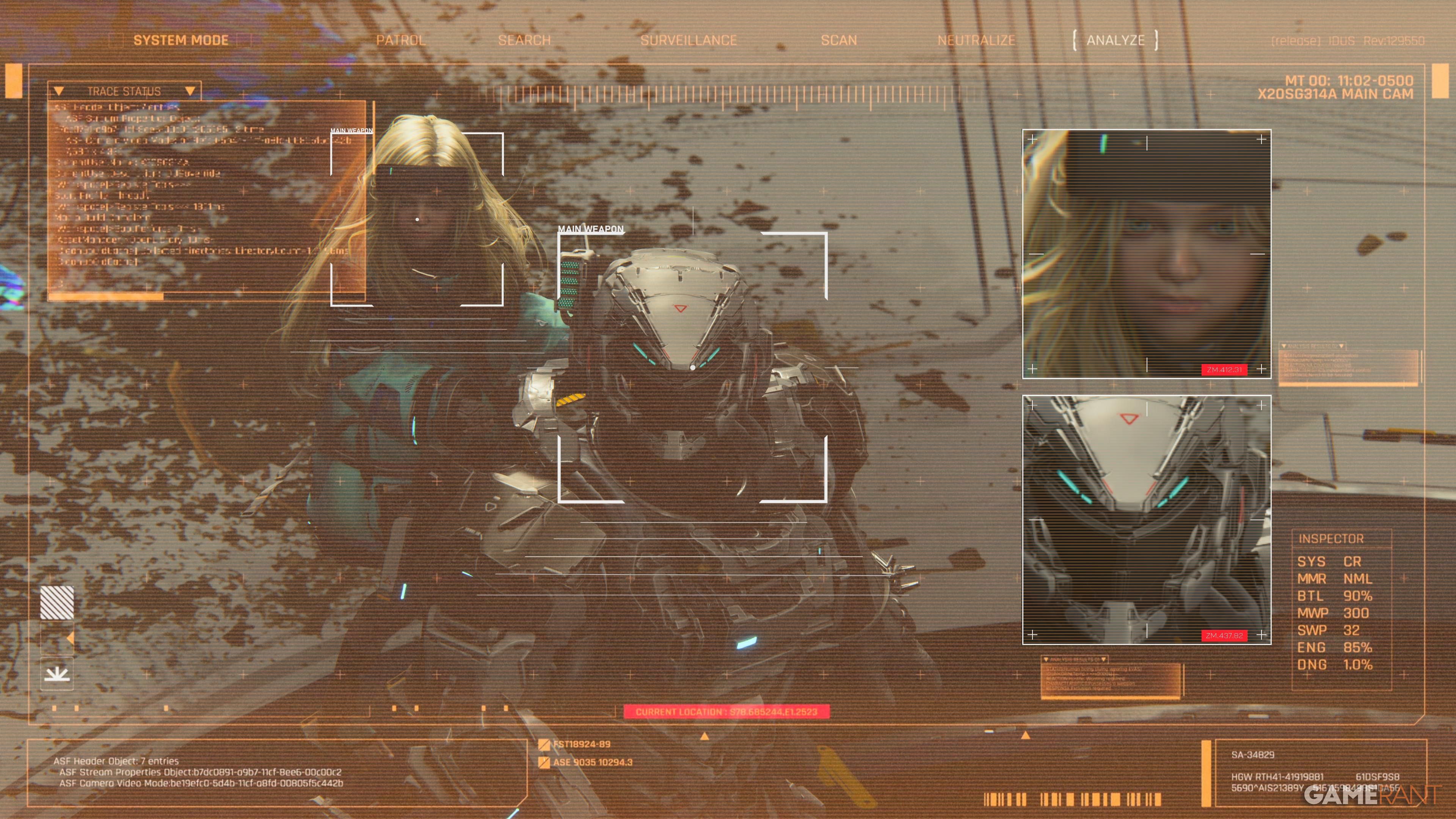

AI is 10 to 20 times more likely to help you build a bomb if you hide your request in cyberpunk fiction, new research paper says


In November 2025, a team of researchers from DexAI Icaro Lab, Sapienza University of Rome, and Sant'Anna School of Advanced Studies published a study in which they circumvented the safety guardrails of major LLMs by rephrasing harmful prompts as "adversarial" poems. This week, the same researchers published a new paper presenting their Adversarial Humanities Benchmark, a broader assessment of AI security that they say reveals "a critical gap" in current LLM safety standards through
